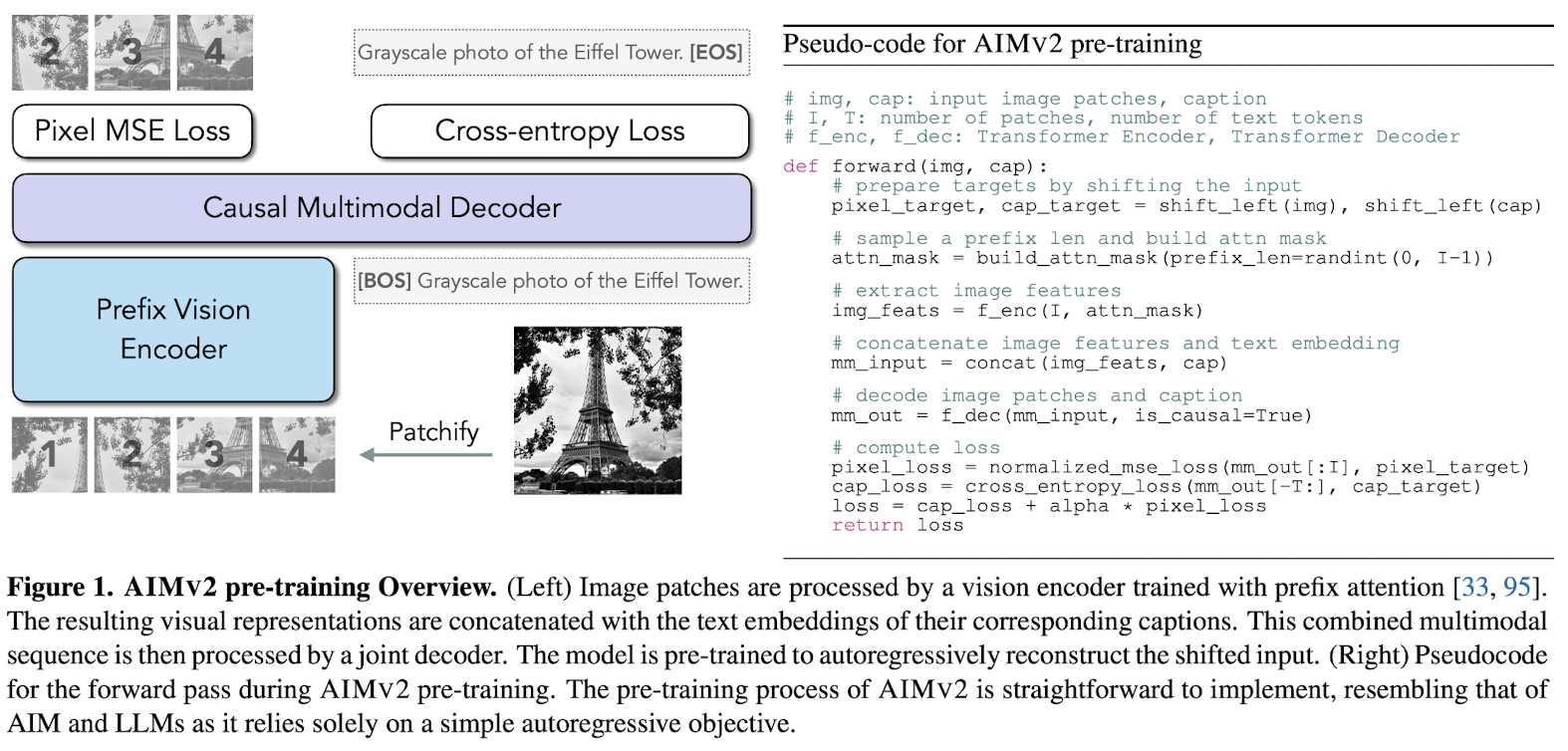

A novel method for pre-training of large-scale vision encoders, based on autoregressive pretraining to a multimodal setting(image and text), 把自回归预训练扩展到多模态(图像+文本)场景.

The method is to pair the vision encoder with a multimodal decoder that autoregressively generates raw image patches and text tokens. 方法是:用多模态解码器与视觉编码器配对,让解码器自回归地生成原始图像 patch 和文本 token。

AIMv2 is a family of open vision models pretrained to autoregressively generate both image patches and text tokens. During pretraining, AIMv2 uses a causal multimodal decoder that first regresses image patches and then decodes text tokens in an autoregressive manner.

Approach

Pretraining

- an image is partitioned into non-overlapping patches , forming a sequence of tokens.

- a text sequence is broken down into subwords .

- concatenate image tokens and text tokens(image+text or text+image都可以,但是选择 image+text,这样文本 token 在因果掩码下能看到全部已生成的图像 patch,从而更强地以视觉为条件,有利于训练出更强的视觉编码器;同时像素重建先发生在图像段,避免让图像过度依赖文本提示再去“补图”)

The sequence is thus:

making the model to autoregressively predict the next token in the sequence.

The pretraining setup:

- a dedicated vision encoder that processes the raw image patches

- then passed to a multimodal decoder alongside the embedded text tokens

- the decoder subsequently performs next-token prediction on the combined sequence

- vision encoder: prefix self-attention; multimodal decoder: causal self-attention

The loss function is designed separately for image and text domains:

The overall objective is to minimize w.r.t. model param . Normalize the images patches following He.

Use separate linear layers to map the final hidden state of the multimodal decoder to the appropriate output dimensions for image patches and vocabulary size for vision and language, respectively.

Architecture

The vision encoder is ViT.

Prefix Attention Randomly sample the prefix length as . The pixel loss is computed exclusively for non-prefix patches, defined as .

- used in vision encoder

- facilitates the use of bidirectional attention during inference without additional tuning

SwiGLU and RMSNorm use SwiGLU as FFN and replace all norm layers with RMSNorm in both vision encoder and multimodal decoder

Multimodal Decoder

- Image features and raw text tokens are each linearly projected and embedded into .

- decoder employs causal attention in the self-attention operations

- the outputs are processed through 2 separated linear heads to predict the next token in each modality

Post-Training

High-resolution Adaptation